AI Detected My Lung Cancer After Doctors Missed It – Now I’m Alive to Tell the Story

Dianne Covey used to think that artificial intelligence (AI) was “a load of old guff”. The 69-year-old, who lives in a retirement complex in Farncombe, Surrey, couldn’t see the point of technology performing tasks that typically require human intelligence, especially in medical settings. But that changed when she developed a cough late last year.

After going to her GP and being sent for a chest X-ray, Dianne was told the radiographer had given her scan the all-clear. But she happened to be visiting the Royal Surrey County Hospital on the day they were installing a revolutionary AI tool: Annalise.ai, which looks for areas of concern in chest and head X-rays, then prioritises cases for urgent review. The scans from that morning were re-analysed by the AI, which flagged Dianne’s as having an anomaly.

She was sent for an emergency CT scan – and diagnosed with stage one lung cancer.

“It was caught very early,” she says, “before it was visible to the naked eye.” She was told it would have taken six months before her cancer would be visible to a radiographer – by which time it could have been much deadlier. Approximately 65 per cent of people diagnosed with stage 1 lung cancer survive five years or more, compared to 5 per cent of those with stage 4, according to Cancer Research UK.

“If it wasn’t for artificial intelligence, it would have just grown and grown inside me, [possibly] until it was too late,” Dianne says. “Artificial intelligence saved my life.”

Thanks to early detection, surgeons were able to completely remove the cancer before Christmas, without the need for chemotherapy or radiotherapy. She has since fully recovered, and has a CT scan every six months to check it hasn’t returned.

“I’m just so in awe of AI. I’ve been given a new lease of life. I quit smoking, I walk between 10 and 15,000 steps a day and the whole thing has done me good.”

Dianne is just one of many British patients benefiting from the wave of AI tools arriving to detect, diagnose and treat cancers – including on the NHS. This is the result of a concerted investment by both the current and previous governments, who hope it is going to revolutionise our desperately stretched health service.

In October 2023, the NHS announced a £21m investment in AI tools for lung cancer diagnosis. In May 2024, £15.5m in government funding led to the rollout of AI to help cut waiting times in radiography departments.

This year a world-leading AI trial to tackle breast cancer was launched by the Department of Health and Social Care. In April, Health Secretary Wes Streeting announced the trial of a new, AI-powered blood test to detect cancer, and in May, an AI skin cancer detection system was greenlit for conditional NHS use. Annalise.ai, which was used to diagnose Dianne, has been adopted by over 45 NHS trusts. The belief driving all this is that AI can radically improve the prognosis of cancer patients in the NHS.

For many people, the idea of being treated by a robot over a human might seem unappealing, so how is AI actually being used? The first and perhaps most important function is to reduce the workload of overstretched staff. AI is far better at pattern recognition than humans, and so can be trained on several thousand scans to recognise anomalies immediately – including ones that are hard for the human eye to spot.

Mike Jones, advanced practitioner radiographer at Royal Surrey NHS Foundation Trust (where Dianne was treated), explains: “At any given time, there may be hundreds of chest X-rays waiting to be reported. Traditionally, these were read in chronological order which meant that some urgent cases wouldn’t be seen until they had made the top of the queue.” According to the Royal College of Radiologists in 2022, demand for CT and MRI scans grows by five per cent each year and waiting lists are lengthening, but the radiology workforce is growing by just three per cent.

“Now, with AI, each X-ray is analysed immediately after it’s taken. The system scans for urgent or critical findings and flags them. These flagged cases are then automatically pushed to the top of the radiology worklist.”

While the system Surrey is working with specifically focuses on chest and brain X-rays, AI is benefiting patient prioritisation in many other areas. Four British hospitals are using Pi, AI software developed by Lucida Medical that analyses MRI scans to help diagnose prostate cancer.

This prioritisation also helps with the unavoidable fact that humans – particularly overworked ones – make mistakes. With an eased workload, the speed with which cancer can be diagnosed or ruled out accelerates, which, as with Dianne, can be critical in determining the prognosis.

This is beneficial particularly for cancers that are both over- and under-diagnosed. Dr Antony Rix, CEO and Co-Founder at Lucida Medical, says that the AI helps navigate the delicate balance of identifying and tackling aggressive cancers, while also avoiding unnecessary and painful treatment.

“Part of the problem with prostate cancer is that the lowest grade of prostate cancer is very common and we actively don’t want to find it,” he says. “About three quarters of men going forward for prostate cancer diagnosis don’t actually have the cancer and we want to avoid biopsies in them as they’re a horrible, costly process.

“But radiologists get tired and it can be very difficult to tell some of the prostate cancers from benign conditions. So we’ve trained AI to be able to tell the difference between ‘nothing to worry about’, aka low risk, and ‘we need to do our biopsy and potentially treat’ aka high risk.”

Crucially, the AI is not replacing the work of the clinician – but rather steering the doctor towards the highest priority cases, and freeing them to spend time on less urgent cases.

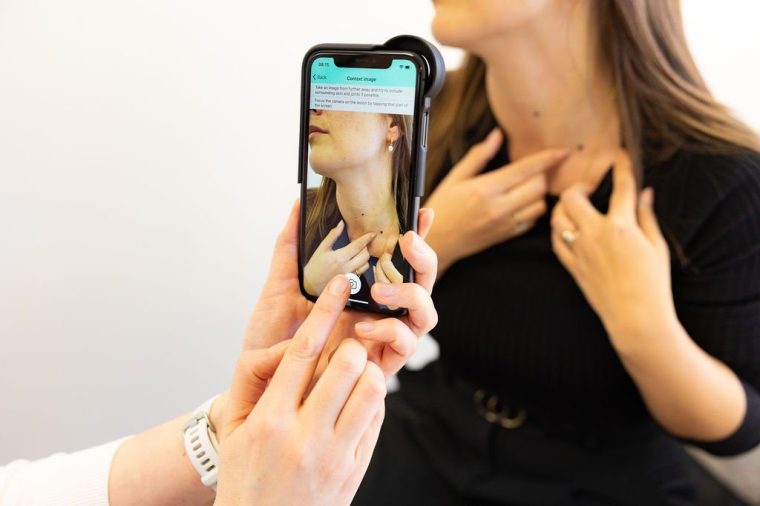

An AI system called DERM, which allows doctors to triage suspected skin cancer referrals using a smartphone, is now being used in 21 NHS trusts. A photo of a suspicious mole or lesion is uploaded to the system and assessed in a matter of minutes, with 99 per cent accuracy.

Dan Mullarkey of Skin Analytics, the company behind it, says the tool has arrived at a time when one in four consultant dermatologist positions in the UK remain unfilled – and over 40 per cent of urgent suspected cancer referral cases turn out to be benign. With the help of AI, “the patient gets that diagnosis and reassurance without needing the dermatologist’s time, and the dermatologist can go back and see the patients who are either waiting for biopsies on their cancer pathways or on the routine backlog.”

He adds that national data shows that one in three patients who turn out to have skin cancer are put on routine referrals and not on the urgent suspected cancer referral pathway, meaning they are often missed.

Claire Maymon, a 60-year-old from Worcestershire, ignored a growth on her leg for a while, until it changed shape. She was referred for an appointment where DERM was used to photograph her skin, and that evening she was phoned by a doctor.

“The doctor told me it was cancerous, and it had to go. I was absolutely stunned by a) the speed at which they had got that information and b) how quickly they got it to me. AI cut out a whole layer, both for the patient and for the doctor: I didn’t have to waste the doctor’s time, and the doctor didn’t have to wonder if this was or wasn’t something. I’m a total convert [to using AI].”

Maymon has since been back and used the same service twice, as well as advising her friends to use it too. “Now I’ve got the confidence of knowing it’s very fast in analysing, and also not fighting my conscience about going to the GP with what actually turned out to be a skin tag.”

Despite the success of the AI diagnosis, Maymon’s enthusiasm for AI was not there initially. When she went to have her skin photographed, she thought it was a waste of time. “I almost called the GP on the way home, thinking that I would book in a ‘proper’ appointment with a proper doctor,” she says.

This scepticism is common among patients and doctors when they are first introduced to technologies such as AI. According to The Health Foundation in 2024, around 1 in 6 members of the public and around 1 in 10 NHS staff think that AI will make care quality worse.

We know that AI is not foolproof. One major potential problem is around bias. If it is not trained on a truly diverse data set, existing inequalities in health outcomes could become entrenched. Studies have already shown that facial recognition programs are worse at classifying dark-skinned women than light-skinned men – some experts have warned that if AI uses insufficient or biased data, cancers in ethnic minorities could be missed.

“AI has huge potential, but it also has some really, really inherent risks around driving inequality,” says Amy Rylance, assistant director of health improvement at Prostate Cancer UK. “For example, one of the radiologists who’s currently installing AI on their MRIs as part of a clinical trial observed that you need the latest MRI machines for the AI to work. That’s fine in the leading teaching hospitals, but if you look at the NHS generally, we know there’s a lot of very old, really decrepit MRI machines where the AI is not going to work.”

Then there are concerns about accuracy. Despite high standards of training and constant performance monitoring, there is still the possibility of false negatives. Some emerging data suggests that AI and radiologists miss an equal number of some cancers, but miss different types. “The exciting option is that by putting these two together, you can find more cancer,” Dr Rix adds.

This is why AI is going to be a complement to, rather than a replacement for, humans, says radiographer Mike Jones.

“The AI isn’t perfect and likely never will be, but it does reliably augment the service. Early data from our site suggests that outcomes are significantly improved when human and AI interpretations are combined.”

Doctors in his trust have responded positively to the rollout of AI.

“Emergency department doctors especially found it really useful as a second pair of eyes, in a busy and challenging environment. It’s not taking over their job, it’s helping them do it better.”

Nikhil Vasdev, a professor of robotic surgery at the University of Hertfordshire, argues that any negatives AI may bring are outweighed by the benefits. “I think AI will help and definitely not hinder us in any way.” The only problem he foresees is that it may lead patients to trust far less reliable forms of AI. “Suppose a patient is diagnosed with cancer and then they ask ChatGPT what treatment they should have – should they have surgery? Radiation treatment? I think that’s where the danger comes up.”

As far as patients are concerned, it couldn’t be rolled out sooner. “AI has come just in the nick of time to help me and my generation deal with the fallout of not being very well prepared for skin cancer in our youth,” Claire concludes. “It’s a brilliant thing.”